Jana Lasser | RWTH Aachen | Complexity Science Hub Vienna

It's a team effort!

Outline

Study 1: How trustworthy is the information shared by political elites on social media platforms?

Article "Social media sharing of low quality news sources by political elites", Lasser et al. 2022, in PNAS Nexus.

Study 2: Can the sharing of untrustworthy information be explained by a changing "ontology of truth"?

Article "From alternative conceptions of honesty to alternative facts in communications by U.S. politicians", Lasser et al. 2023, in Nature Human Behaviour.

Political elites have agenda-setting powers [1, 2].

Relatively little attention to information sharing practices of politicians in the literature [3].

Aim: Quantify the trustworthiness of information shared by politicians on social media.

[1] Chong D., Druckman JN., A theory of framing and opinion formation in competitive elite environments, Journal of Communication (2007).

[2] Lewandowsky S., Jetter M., Ecker UKH., Using the president’s tweets to understand political diversion in the age of social media, Nature Communications (2020).

[3] Mosleh M., Rand D., Measuring exposure to misinformation from political elites on Twitter, Nature Communications (2022).

RQ 1: How does the trustworthiness of the information shared on social media differ between parties?

RQ 2: How does the trustworthiness of the shared information differ over time?

(1) Collect tweets by politicians in three major western democracies.

U.S.: two-party system, negligible public broadcasting

U.K.: (almost) two-party system, one public broadcaster

Germany: many-party system, multiple public broadcasters

(2) Extract all links from the posts. Follow shortened links.

(3) Assess the trustworthiness of the information that was linked to.
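Step (2) can be illustrated with a minimal sketch that pulls links out of a post and reduces them to their domain. The function name `extract_domains`, the regular expression and the example tweet are assumptions for illustration, not the pipeline used in the study. Following shortened links (e.g. `t.co`) requires a network request and is left out here.

```python
import re
from urllib.parse import urlparse

# Simple pattern for http(s) links; real tweets may need a more robust parser.
URL_PATTERN = re.compile(r"https?://\S+")


def extract_domains(text):
    """Return the domains of all http(s) links found in `text`."""
    domains = []
    for url in URL_PATTERN.findall(text):
        # Drop punctuation that trails the link at the end of a sentence.
        host = urlparse(url.rstrip(".,;:!?")).hostname
        if host is None:
            continue
        # Treat "www.example.com" and "example.com" as the same domain.
        if host.startswith("www."):
            host = host[4:]
        domains.append(host)
    return domains


# Hypothetical example post.
tweet = "Read this: https://www.nytimes.com/2020/01/01/example.html and https://t.co/abc123."
print(extract_domains(tweet))
```

The shortened `t.co` link in the example would still have to be resolved to its target before a domain rating can be looked up.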

We assess the trustworthiness of the domain using journalistic criteria, not the trustworthiness of the individual articles.

Validation with an independently compiled super-list of academic lists and fact-checking sites.

(-) Does not repeatedly publish false content (22)

(-) Gathers and presents information responsibly (18)

(-) Regularly corrects or clarifies errors (12.5)

(-) Handles the difference between news and opinion responsibly (12.5)

(-) Avoids deceptive headlines (10)

(-) Website discloses ownership and financing (7.5)

(-) Clearly labels advertising (7.5)

(-) Reveals who is in charge, including any possible conflicts of interest (5)

(-) The site provides names of content creators, along with contact/bio info (5)

See also NewsGuard's rating criteria.
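The points in parentheses add up to 100. A minimal sketch of how such a score could be computed, assuming the domain score is simply the sum of the points for every criterion a site meets. The criterion keys and the function `trust_score` are hypothetical names chosen for this example.

```python
# Points per criterion, taken from the list above. The keys are made-up
# identifiers for this sketch, not official NewsGuard names.
CRITERIA_POINTS = {
    "does_not_repeatedly_publish_false_content": 22.0,
    "gathers_and_presents_information_responsibly": 18.0,
    "regularly_corrects_or_clarifies_errors": 12.5,
    "handles_news_vs_opinion_responsibly": 12.5,
    "avoids_deceptive_headlines": 10.0,
    "discloses_ownership_and_financing": 7.5,
    "clearly_labels_advertising": 7.5,
    "reveals_who_is_in_charge": 5.0,
    "provides_names_of_content_creators": 5.0,
}


def trust_score(criteria_met):
    """Sum the points of all criteria a domain meets (assumed scoring rule)."""
    met = set(criteria_met)
    unknown = met - set(CRITERIA_POINTS)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    return sum(CRITERIA_POINTS[c] for c in met)


# A site that meets every criterion except the first one.
all_but_first = [c for c in CRITERIA_POINTS
                 if c != "does_not_repeatedly_publish_false_content"]
print(trust_score(CRITERIA_POINTS))  # all criteria met
print(trust_score(all_but_first))
```

Under this assumed rule, failing only the most heavily weighted criterion already costs a site 22 of its 100 points.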

| Domain | Score |
|---|---|
| nytimes.com | 100 |
| foxnews.com | 57 |
| beforeitsnews.com | 0 |

NewsGuard is not a blacklist.

Manual validation of all domains that contribute > 0.1% of link volume shows that no major news sites are missing.

Parties to the right overall share more links to untrustworthy sites (< 60 points).
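As a rough illustration of the quantity behind this statement, the sketch below computes the share of rated links per party that point to domains scoring below 60. The three domain scores are the example values from the table above. The party labels, the link list and the function `untrustworthy_share` are hypothetical, and skipping unrated domains is an assumption of this sketch.

```python
from collections import defaultdict

THRESHOLD = 60  # domains scoring below this count as untrustworthy

# Example scores from the table above.
DOMAIN_SCORES = {"nytimes.com": 100, "foxnews.com": 57, "beforeitsnews.com": 0}


def untrustworthy_share(links):
    """Map each party to the fraction of its rated links below THRESHOLD.

    `links` is an iterable of (party, domain) pairs. Links to domains
    without a score are skipped (an assumption of this sketch).
    """
    rated = defaultdict(int)
    low = defaultdict(int)
    for party, domain in links:
        score = DOMAIN_SCORES.get(domain)
        if score is None:
            continue
        rated[party] += 1
        if score < THRESHOLD:
            low[party] += 1
    return {party: low[party] / rated[party] for party in rated}


# Hypothetical links shared by two made-up parties "A" and "B".
links = [
    ("A", "nytimes.com"),
    ("A", "foxnews.com"),
    ("B", "nytimes.com"),
    ("B", "unrated.example"),
]
print(untrustworthy_share(links))
```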

Trustworthiness of sources stays relatively constant across parties.

Trustworthiness of sources shared by Republican Congress Members drops.

RQ 1: Parties on the right share more links to untrustworthy sites in the U.S., U.K. and Germany.

RQ 2: The steep drop in trustworthiness of links shared by Republicans is unique to the U.S.

See "Social media sharing of low quality news sources by political elites", Lasser et al. 2022, PNAS Nexus.

Two components of "honesty"

Fact-speaking: search for accurate information and updating of beliefs based on that information.

Belief-speaking: relates to speaker's beliefs and feelings, without regard to factual accuracy.

RQ 1: Can we identify belief-speaking and fact-speaking in public-facing statements by politicians?

RQ 2: How does the usage of the two honesty components in public-facing statements differ between parties and over time?

RQ 3: Is there a link between honesty components and the trustworthiness of information?

Distributed Dictionary Representation (DDR) approach [1]:

(1) Create a dictionary of words for each honesty component

(2a) Embed each word in the dictionary to obtain a vector representation & average all words.

(2b) Embed each word in the document of interest & average all words.

(3) Calculate the cosine similarity between the averaged embeddings.
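The steps above can be sketched in a few lines. The three-dimensional toy vectors in `EMBEDDINGS`, the two small word lists and the example sentence are made up for illustration. A real application would use pretrained word embeddings and the full dictionaries.

```python
import math

# Toy word embeddings (hypothetical values, for illustration only).
EMBEDDINGS = {
    "believe": [0.9, 0.1, 0.0],
    "feel": [0.8, 0.2, 0.1],
    "think": [0.7, 0.3, 0.0],
    "evidence": [0.1, 0.9, 0.2],
    "data": [0.0, 0.8, 0.3],
    "shows": [0.2, 0.7, 0.1],
}


def mean_vector(words):
    """Average the embeddings of all words that have an embedding."""
    vectors = [EMBEDDINGS[w] for w in words if w in EMBEDDINGS]
    if not vectors:
        raise ValueError("none of the words has an embedding")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def ddr_similarity(dictionary_words, document_words):
    """Steps (2a), (2b) and (3): average both sides, then compare."""
    return cosine_similarity(mean_vector(dictionary_words),
                             mean_vector(document_words))


# Step (1): tiny stand-in dictionaries for the two honesty components.
belief_dictionary = ["believe", "feel"]
fact_dictionary = ["evidence", "data"]

document = "i think the data shows".split()
print("belief-speaking:", ddr_similarity(belief_dictionary, document))
print("fact-speaking:  ", ddr_similarity(fact_dictionary, document))
```

Words without an embedding are skipped here, which is one possible design choice. With these toy vectors the example sentence comes out closer to the fact dictionary than to the belief dictionary.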

[1] Garten, J. et al., "Dictionaries and distributions: Combining expert knowledge and large scale textual data content analysis" in Behavior Research Methods, 2018.

[1] Di Natale et al., "LEXpander: applying colexification networks to automated lexicon expansion" in arXiv, 2022.

Dictionaries available at https://osf.io/mq6kz.

We now have measurement instruments that allow us to measure belief-speaking similarity Db and fact-speaking similarity Df in texts.

Db < 0 means a text is dissimilar to belief-speaking.

Db > 0 means a text is similar to belief-speaking.

Df < 0 means a text is dissimilar to fact-speaking.

Df > 0 means a text is similar to fact-speaking.

Produced with scattertext https://github.com/JasonKessler/scattertext.

Both belief-speaking and fact-speaking increased after Trump's election [1].

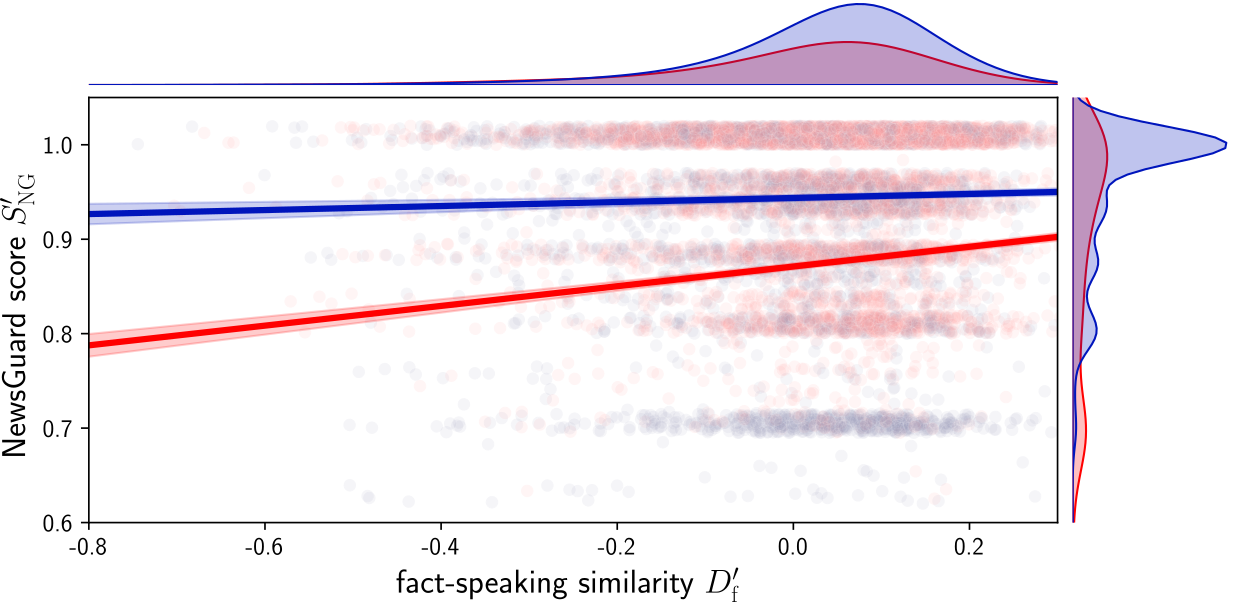

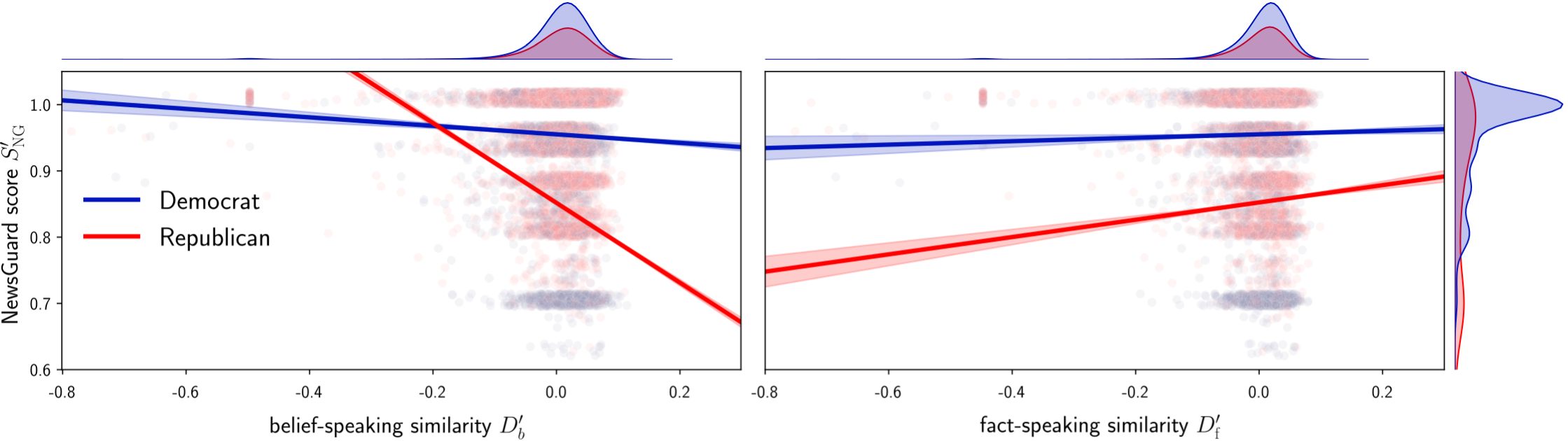

How do the honesty components relate to sharing of untrustworthy information?

Linear Mixed Effects model with random slopes and intercepts for every Twitter account (user ID) and interaction terms.
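One way to write down a model of this kind, as a sketch only: the symbols below are assumptions for illustration, and the exact specification is given in the article. Here $\mathrm{NG}_{ij}$ is the NewsGuard score for observation $i$ of account $j$, $D_{b,ij}$ and $D_{f,ij}$ are the belief-speaking and fact-speaking similarities, and $P_j$ is a party indicator.

```latex
\mathrm{NG}_{ij} =
    \beta_0 + \beta_1 D_{b,ij} + \beta_2 D_{f,ij} + \beta_3 P_j
  + \beta_4 D_{b,ij} P_j + \beta_5 D_{f,ij} P_j
  + u_{0j} + u_{1j} D_{b,ij} + u_{2j} D_{f,ij}
  + \varepsilon_{ij}
```

The $\beta$ terms are fixed effects, including the interactions with party. The terms $u_{0j}$, $u_{1j}$ and $u_{2j}$ are the random intercept and random slopes for account $j$, and $\varepsilon_{ij}$ is the residual.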

An increase in belief-speaking similarity of tweets of 10% predicts a drop in average NewsGuard score of 12.8 points for Republicans.

An increase in fact-speaking similarity of tweets of 10% predicts an increase in average NewsGuard score of 10.6 points for Republicans and 2.1 points for Democrats.

We collect the text of 154,000 news articles linked in the tweets.

We confirm the effects and also find a relationship for belief-speaking and Democrats.

RQ 1: We develop a new instrument to measure the honesty components belief-speaking and fact-speaking in political speech using DDR.

RQ 2: There is significant variance of honesty components over time and between parties.

RQ 3a: There is a strong negative correlation between belief-speaking and the trustworthiness of shared information for Republicans.

RQ 3b: There is a strong positive correlation between fact-speaking and trustworthy information for both parties.

"From alternative conceptions of honesty to alternative facts in communications by U.S. politicians", Lasser et al., Nature Human Behaviour (2023).

(-) Both Democrats and Republicans increasingly use both belief-speaking and fact-speaking in public-facing communication on social media.

(-) Republicans increasingly share untrustworthy information, Democrats do not.

(-) Belief-speaking and low information trustworthiness are strongly correlated mostly for Republicans.

Belief-speaking might be necessary but not sufficient to engage in sharing of untrustworthy information.